Transfer Learning is a machine learning method where a pre-trained model is used as a starting point for a related task. It uses the knowledge of a previous model to solve a new, yet associated, problem, saving time and computing resources. During tech interviews, it’s used to assess the candidate’s understanding of machine learning models and their ability to leverage their knowledge transfer skills for efficient problem solutions. This blog post will explore interview questions and answers pertaining to the important concept of Transfer Learning.

Transfer Learning Fundamentals

- 1.

What is transfer learning and how does it differ from traditional machine learning?

Answer:Transfer Learning is an adaptive technique where knowledge gained from one task is utilized in a related, but distinct, target task. In contrast to traditional ML techniques, which are typically task-specific and learn from scratch, transfer learning expedite learning based on an existing, complementary task.

Key vs. target task distinction

Traditional ML: The algorithm starts with no information about the task at hand and learns from the provided labeled data.

Transfer Learning: The model uses insights from both the key and the target task, preventing overfitting and allowing for improved generalization.

Data requisites for each approach

Traditional ML: Essentially, a large and diverse dataset that’s labeled and completely representative of the task you want the model to learn.

Transfer Learning: This approach can operate under varying degrees of data constraints. For instance, you might only need limited labeled data from the target domain.

Training methods

Traditional ML: The model is initially provided with random parameter values, and learns to predict off the examined data through techniques like stochastic gradient descent.

Transfer Learning: The model typically begins with parameters that are generally useful or beneficial from the key task. These parameters are further fine-tuned with data from the target task and can also be frozen to restrict further modifications based on the key task.

Fitness for different use-cases

Traditional ML: Perfect for tasks where extensive labeled data from the target task is accessible and is highly typical.

Transfer Learning: Exceptional for situations with lesser labeled data, or when knowledge from the key task can significantly enhance learning on the target task.

- 2.

Can you explain the concept of domain and task in the context of transfer learning?

Answer:Domain Adaptation and Task Adaptation are prominent themes in Transfer Learning.

Domain Adaptation

When the source and target domains differ:

-

Covariate Shift: P(X) changes, leading to a mismatch in input feature distributions. This can be corrected using methods such as Maximum Mean Discrepancy (MMD) or Kernel Mean Matching (KMM).

-

Concept Shift: The conditional distribution P(Y|X) might change. Techniques like importance re-weighting and sample re-weighting may mitigate this.

Task Adaptation

In the task transfer scenario, the source and target tasks are related but not identical.

- Task Preservation: The model retains some relevant knowledge and expertise from the source task (or tasks) and uses it to enhance the performance on the target task.

For instance, when a model is trained on visual recognition tasks (ImageNet) and further fine-tuned for particular classes or for detection tasks, like object detection or semantic segmentation, we are witnessing task transfer.

In the context of Reinforcement Learning, task adaptation can be achieved through concepts such as:

- Multi-Task Learning, where a single agent learns to handle multiple tasks.

- Curriculum Learning, which presents the agent with tasks of increasing complexity.

-

- 3.

What are the benefits of using transfer learning techniques?

Answer:Transfer learning leverages existing knowledge from one task or domain to enhance learning and performance in another, often different, task or domain. This approach has garnered substantial positive attention for its various advantages.

Benefits of Transfer Learning

-

Reduction in Data Requirements: Especially advantageous when the target task has limited data available, transfer learning helps alleviate the burdens of extensive data collection and annotation.

-

Speed and Efficiency: Training on pre-trained models can be considerably faster, as they start with a set of optimized weights. This improved efficiency is especially beneficial in time-sensitive applications, such as real-time image recognition or online recommendation systems.

-

Improved Generalization: By initializing from a pre-trained model, the model is exposed to a broader and more diverse feature space, resulting in better generalization performance.

-

Address of Overfitting: When there’s a risk of overfitting due to a small dataset, transfer learning can aid in curbing this issue by starting from a pre-trained model and fine-tuning its parameters.

-

Performance Boost: Transfer learning regularly yields superior results, outperforming models trained from scratch, especially when the pre-trained model is from a related domain or task. For instance, a model pre-trained on a dataset of natural images might be more adept at features like edges and textures, attributes central to many computer vision tasks.

-

Insights from One Domain to Another: Transfer learning helps to propagate domain-specific or task-specific knowledge. For example, models pre-trained on languages can enrich the understanding of other languages, enabling tasks like translation and categorization of articles.

-

Need for Computational Resources: Training deep neural networks from scratch often demands extensive computational power. Transfer learning, in contrast, is less resource-intensive, making it more accessible to a wider audience.

-

Anchoring in Consistent Features: Pre-trained models distill robust, learned representations, which are then adaptable for more particular tasks. This consistency in foundational features can be remarkably advantageous.

-

Robustness Against Adversarial Attacks: Transfer learning can boost model resilience in the face of adversarial inputs, as the extracted features from pre-trained models often pertain to the wider context and are less susceptible to minute perturbations.

-

Guided Exploration: In complex model architectures, it can be perplexing to explore the weight space with random initialization. By starting with the knowledge imbibed by pre-trained models, this space can be navigated more efficiently and purposefully.

Contextual Applications

- NLP Domains: Transfer learning plays an integral role in fine-tuning models such as BERT for more specific NLP tasks.

- Computer Vision: Tasks like object detection and facial recognition have benefited significantly from pre-trained models like YOLO and OpenFace.

- Healthcare and Biotechnology: With the need for extensive and often sensitive datasets, the transfer learning approach has proven invaluable in tasks like medical image analysis and drug discovery.

-

- 4.

In which scenarios is transfer learning most effective?

Answer:Transfer learning is particularly powerful in settings that involve:

-

Limited Data: When the target task possesses modest training samples, reusing knowledge from a related source domain is invaluable.

-

Costly Data Collection: In real-world applications, acquiring labeled data can be time-consuming and expensive. Transfer learning reduces this burden by bootstrapping the model with pre-existing knowledge.

-

Model Generalization: Traditional models might overfit to small datasets, making them unreliable in new, unseen environments. Pre-trained models, by contrast, have been exposed to a diverse range of data, promoting generalization.

-

Model Training Efficiency: Training from scratch is computationally intensive. Using pre-trained models as a starting point accelerates the learning process.

-

Task Similarities: Transferring knowledge from a source domain that aligns with the target task can yield more substantial benefits.

-

Hierarchical Knowledge: Knowledge transfer is most potent when operating across levels of a knowledge hierarchy. For instance, understanding basic visual features can accelerate learning in tasks like object recognition.

-

Domain Adaptation: When the data distribution of the source and target domains diverge, transfer learning strategies can help bridge this gap for improved performance.

In alignment with these scenarios, techniques like feature extractor freezing, partial model fine-tuning, and domain adaptation tend to be especially effective.

-

- 5.

Describe the difference between transductive transfer learning and inductive transfer learning.

Answer:Transductive Transfer Learning involves target domain tasks where training data is augmented. It is a quick and cost-effective way to adapt to specific scenarios but doesn’t generalize well.

On the other hand, Inductive Transfer Learning is specifically designed to build models that excel at generalization across target tasks that were unseen during training, making it beneficial when large diverse datasets are available.

Transductive Transfer Learning

In transductive transfer learning, the goal is to improve the performance of a target task by leveraging knowledge from a related, but not strictly aligned, source task or domain.

Example

Consider an image classification task. You pretrain a model on a dataset of cat and dog images. However, the dataset does not include images of tigers. This model can still predict images of tigers reasonably well because the visual features of cats and dogs are shared with tigers.

Inductive Transfer Learning

Inductive Transfer Learning seeks to utilize knowledge from one or more \textit{source tasks or domains} to enhance the generalization of a target task, even when the target domain is different from the source domain or tasks.

Application in Text Classification

Assume you have a model trained to identify spam emails. You can use this model to bootstrap the training of a new model that distinguishes between different genres of writing, such as academic papers, legal documents, and regular emails. Even though the source and target tasks are vastly different, the previously learned information about language patterns can still be valuable.

Key Differentiators

- Data Usage: Inductive methods incorporate source data into the training process, whereas transductive methods do not.

- Generalization: Inductive methods aim for broad applicability across new target tasks or domains, prioritizing generalization. Transductive methods focus on optimizing performance for the specific target tasks or domains considered during training.

Practical Considerations

- Transductive Learning: Useful when you have limited or no access to target domain data, or when quick modifications for a specific target are required.

- Inductive Learning: Suitable when abundant target domain data is available, or when there’s a need for strong generalization properties for a wide range of potential target tasks or domains.

Best of Both Worlds: Semi-Supervised Learning

Semi-supervised learning aims to benefit from both labeled data, usually from the source domain, and unlabeled data from the target domain. By leveraging knowledge from both domains, it balances the advantages of transductive and inductive learning while potentially reducing the need for excessive manually labeled data.

- 6.

Explain the concept of ‘negative transfer’. When can it occur?

Answer:While Transfer Learning typically offers advantages, it does present some potential issues. One of these is the phenomenon known as negative transfer.

What Is Negative Transfer?

Negative Transfer occurs when knowledge or parameters from a source task hinder learning in the target task, rather than helping it.

When Does Negative Transfer Arise?

-

Dissimilarity Between Tasks: If the source and target tasks are quite different, the features and representations learned in the source might not align well with the target. As a result, the transferred knowledge can be detrimental instead of helpful.

-

Incompatible Feature Spaces: Learning happens in an embedded feature space. Negative Transfer can occur when the source and target feature spaces are not aligned.

-

Domain Shift: Mismatches between source and target datasets, such as different distributions or sampling biases can lead to negative transfer.

-

Task Ambiguity: If the source task is ambiguous or focuses on multiple objectives without clear hierarchy or structure, it might not provide the right guidance for the target task.

Strategies to Mitigate Negative Transfer

- Selective Learning: Limit the influence of the source domain on certain dimensions of the target domain.

- Data Augmentation: Expand the target domain dataset to bridge gaps between the source and target.

- Adaptive Methods: Utilize adaptive mechanisms to dynamically adjust the influence or relevance of the source domain during training in the target domain. For example, yosinski_2014.

- Progressive Mechanisms: Gradually introduce the source domain’s knowledge into the target domain’s learning process, which could alleviate some of the issues associated with negative transfer. An example would be multi-stage fine-tuning.

- Regularization Techniques: Implement methods like domain adversarial training or discrepancy-based losses to align the distributions of source and target domains, reducing the risk of negative transfer.

- Task-Aware Feature Alignment: Use techniques like task-aware adaptation or learning task-specific subspaces to ensure that transferred features assist the target task optimally.

-

- 7.

What role do pre-trained models play in transfer learning?

Answer:Pre-trained models are at the core of transfer learning, enabling neural networks to adapt quickly to new datasets and specific tasks.

Key Components of Pre-trained Models

-

Deep Learning Architectures: Pre-trained models come equipped with top-performing architectures, such as VGG, Inception, or ResNet. These networks are characterized by various depths, which consist of millions of parameters.

-

Learned Features: The models have visual or semantic features already learned from large-scale datasets like ImageNet.

-

Fixed or Fine-Tuned Layers: During transfer learning, layers are either kept constant or further trained on new data, as needed.

Training Speed and Data Efficiency Benefits

-

Parameter Warm-Starting: Initial weights, derived from pre-training, jump-start the training process, thereby reducing convergence time and challenges like vanishing gradients.

-

Elimination of Redundant Learning: When dealing with datasets of limited size, pre-trained models ensure that the network doesn’t redundantly learn generic patterns already captured in the pre-training phase.

Use-Case Flexibility

-

Broad Domain Competence: These models have been optimized for genericity, making them suitable for a range of tasks. However, you can still refine them for more specific scenarios.

-

Fine-Tuning Control: If fine-tuning isn’t required, you can utilize pre-trained layers as fixed feature extractors, saving computational resources.

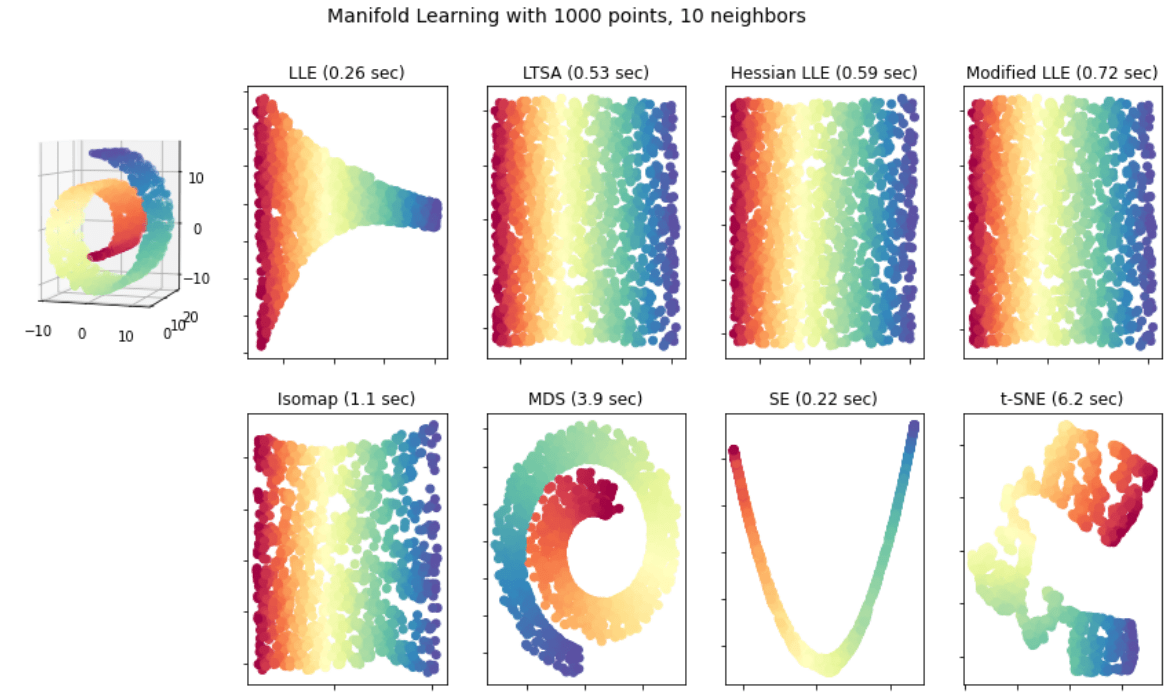

Visual Representation with Manifold Learning

-

Distribution Adaptation: The premise is to align different distributions, such as pre-training data and target data, in a shared feature space.

-

Learnable Features: Last-layer weights capture task-specific characteristics, superimposed on the more global knowledge represented by pre-trained weights.

Practical Implementation: The Keras Ecosystem

Here is the Keras code:

from keras.applications import VGG16 from keras import layers, models, optimizers # Load the pre-trained model base_model = VGG16(weights='imagenet', include_top=False, input_shape=(150,150,3)) # Add a custom classifier model = models.Sequential() model.add(base_model) model.add(layers.Flatten()) model.add(layers.Dense(256, activation='relu')) model.add(layers.Dense(1, activation='sigmoid')) # Freeze the base model's layers base_model.trainable = False # Compile the model and train model.compile(optimizer=optimizers.RMSprop(lr=2e-5), loss='binary_crossentropy', metrics=['accuracy']) model.fit(train_data, train_labels, epochs=5, batch_size=20)When incorporating pre-trained models through Keras, one can customize the fine-tuning process, as well as the learning rate for the trainable layers.

-

- 8.

How can transfer learning be deployed in small data scenarios?

Answer:While transfer learning is traditionally employed with large datasets, it is still beneficial in small data situations.

Challenges with Small Datasets

- High Dimensionality: Few data points in high-dimensional spaces make it challenging to identify patterns.

- Overfitting Risk: Models may memorize the training data rather than learn generalizable features.

- Bias Introduction: Learning from limited data may introduce a sampling bias.

Unique Benefits of Transfer Learning

- Feature Extraction: Leveraging pretrained models to extract relevant features.

- Fine-Tuning: Adapting specific model layers to the task at hand.

- Data Augmentation: Combining existing and limited data with augmented samples.

- Regularization: Merging small dataset techniques with the regularization capabilities of large models.

Practical Strategies for Small Datasets

- Utilize Pretrained Models: Select a model trained on similar data to extract abstract features.

- Apply Layer-Freezing: Keep early layers static when using a pre-trained model.

- Adopt Data Augmentation: Boost the small dataset with artificially generated samples.

- Incorporate Domain Knowledge: Leverage task-specific knowledge and available features.

- Employ Ensembles: Combine multiple models to enhance predictions.

- Leverage Synthetic Data: Generate data that software or simulated systems can provide.

- Use Embeddings: Represent entities such as words or sentences in a lower-dimensional space.

Code Example: Data Augmentation

Here is the Python code:

from tensorflow.keras.preprocessing.image import ImageDataGenerator # Set up data generators train_datagen = ImageDataGenerator( rescale=1./255, rotation_range=40, width_shift_range=0.2, height_shift_range=0.2, shear_range=0.2, zoom_range=0.2, horizontal_flip=True, fill_mode='nearest' ) test_datagen = ImageDataGenerator(rescale=1./255) # Flow training images in batches of 32 using train_datagen generator train_generator = train_datagen.flow_from_directory( train_dir, # This is the source directory for training images target_size=(150, 150), # All images will be resized to 150x150 batch_size=32, class_mode='binary') # Flow validation images in batches of 32 using test_datagen generator validation_generator = test_datagen.flow_from_directory( validation_dir, target_size=(150, 150), batch_size=32, class_mode='binary')

Techniques and Approaches

- 9.

What are feature extractors in the context of transfer learning?

Answer:In the context of transfer learning, a feature extractor serves as a pre-trained model’s component that is employed to manually extract features from specific layers.

Types of Feature Extractors

-

Shallow Extractors: Utilize features from the early layers of the pre-trained models. Faster but may not capture complex characteristics.

-

Deep Extractors: Operate on features from the deeper layers, often yielding superior discriminatory capabilities. Slower than shallow extractors but can detect intricate patterns.

-

Multi-Layer Extractors: Blend features from multiple levels, combining the strengths of both shallow and deep extractors.

Advantages of Feature Extractors

-

Lightweight Transfer: Suitable when the full pre-trained model is unwieldy, allowing for more efficient knowledge transfer.

-

Flexible Feature Selection: Provides adaptability in selecting the layers that best suit the task at hand.

Use-Cases

-

Visual Recognition: Applied in techniques like bag-of-words and SIFT for object detection and classification.

-

Text Analytics: Leverages strategies such as TF-IDF and semantic word embeddings for sentiment analysis and document clustering.

-

Signal Processing: Utilizes raw waveform or spectrogram features for audio or time-series analysis.

-

Variational Data Streams: Addresses continuous learning scenarios where data shifts over time.

-

Multi-Modal Learning: Involves combining information from varied sources such as text and images.

When to Use Feature Extractors

-

Limited Dataset Size: Aids in training with restricted data availability.

-

Task Specificity: Valuable when engaging in a specialized task that deviates from the pre-trained model’s original purpose.

Code Example: Using a Pre-Trained CNN for Feature Extraction

Here is the Python code:

import numpy as np import tensorflow as tf from tensorflow.keras.applications import VGG16 from tensorflow.keras.applications.vgg16 import preprocess_input # Load pre-trained model model = VGG16(weights='imagenet', include_top=False) # Load and preprocess data img_path = 'path_to_image.jpg' img = tf.keras.preprocessing.image.load_img(img_path, target_size=(224, 224)) img_array = tf.keras.preprocessing.image.img_to_array(img) img_array = np.expand_dims(img_array, axis=0) img_array = preprocess_input(img_array) # Extract features features = model.predict(img_array) # Verify output shape print(features.shape) -

- 10.

Describe the process of fine-tuning a pre-trained neural network.

Answer:Fine-tuning in machine learning typically refers to updating or retraining specific parts of a pre-trained model to suit a new or more specific task. This often helps leverage both the generality of pre-existing knowledge and the nuances required for the specific task.

Process Step by Step

-

Pre-Training

This initial stage involves training a neural network on a large, diverse dataset such as ImageNet for an image classifier, or Wikipedia for a text-based model.

-

Data Collection

Gather a new dataset specific to your task. This step is often referred to as task-specific data collection.

-

Task Pre-Training

Train your network on the task-specific dataset to lay the groundwork for fine-tuning. This usually involves freezing the majority of the model’s layers.

- Freeze: What it does is to determine during training, whether or not a layer’s parameters will be updated. It means that the backpropagation process does not compute the gradients with respect to the preserved layers’ parameters.

-

Fine-tuning

This stage involves unfreezing certain layers in the model and further training it using both the pre-training and task-specific datasets.

- Unfreeze: This action is to modify the parameter update rule for a given set of layers. It allows backpropagation to compute gradients with respect to their parameters and performs updates to these parameters during optimization.

-

Validation

Evaluate your fine-tuned model to ensure it meets the desired accuracy and performance metrics, potentially adjusting fine-tuning hyperparameters as needed.

Layer Freezing Mechanism

During step 3, where the bulk of the transfer learning preparation occurs, you’ll want to freeze certain layers. This means those layers’ weights are not updated during backpropagation.

The reasons to freeze these layers, as well as the depth in the model to freeze, are manifold:

- These pre-trained lower layers are typically generic feature extractors such as edge or texture detectors, which are generally useful for a wide array of tasks.

- The deeper you go into the network, the more abstract and task-agnostic the features become.

A general guideline is to freeze early layers for tasks where the input data is similar to the original domain, allowing the model to learn newer and more task-specific features.

Conversely, unfreezing earlier layers might benefit fields where the learned features are more generalizable.

Implementation Example

Here is Python code:

import tensorflow as tf import tensorflow_hub as hub # Load the pre-trained module module_url = "https://tfhub.dev/google/tf2-preview/mobilenet_v2/feature_vector/4" module = hub.KerasLayer(module_url) # Define a new, task-specific model model = tf.keras.Sequential([ module, tf.keras.layers.Dense(1, activation='sigmoid') ]) # Compile the model model.compile(loss='binary_crossentropy', optimizer='adam', metrics=['accuracy']) # Train on the task-specific data model.fit(task_data, epochs=5) # Now, unfreeze the base model module.trainable = True # Re-compile the model to set the unfreezing into effect model.compile(loss='binary_crossentropy', optimizer='adam', metrics=['accuracy']) # Perform fine-tuning using both pre-training and task-specific data model.fit(fine_tune_data, epochs=5) -

- 11.

What is one-shot learning and how does it relate to transfer learning?

Answer:One-shot learning is a machine learning paradigm where the model makes accurate predictions after seeing just one example of each class. It has clear associations and differences when compared to Transfer Learning.

One-Shot Learning

With one-shot learning, a model can generalize from a single sample. This capability is essential in scenarios where collecting labeled data for each class is impractical or costly. However, one-shot learning typically requires highly specialized algorithms and high computational power to achieve reliable results.

Transfer Learning

Transfer learning is a technique that leverages representations learned from one task for use on a related, but different task. It is a common approach in machine learning, especially for deep learning, to address data scarcity and compute limitations.

In transfer learning, a model:

- Is initially trained on a large, diverse dataset for a related task (source domain).

- Then, this pre-trained model, or just its features, is further trained or used as a feature extractor for a smaller, specialized dataset for the target task (target domain).

Comparatively, transfer learning:

- Learns from multiple examples during the source domain training.

- Might still require several examples in the target domain for fine-tuning.

- Strives to identify generalizable features, optimizing for task performance.

Capabilities of Combined Approaches

While both techniques have distinct strengths and weaknesses, their synergy can lead to robust and practical solutions.

One-shot learning can benefit from features refined by transfer learning, potentially reducing its dependence on ultra-specialized algorithms and enabling its broader adoption.

Conversely, transfer learning can become more effective, especially when examples in the target domain are sparse, by integrating the rich, context-aware representations learned from the one-shot strategy.

- 12.

Explain the differences between few-shot learning and zero-shot learning.

Answer:While both Zero-Shot Learning (ZSL) and Few-Shot Learning (FSL) stem from the broader area of Transfer Learning, they have distinct characteristics in terms of learning setups, data requirements, and model flexibility.

Core Distinctions

Learning Setup

-

Zero-Shot Learning: Trains models on two disjoint sets: labeled data for known classes and textual or other external data describing the unseen classes. The goal is to predict these unseen classes with no direct examples.

-

Few-Shot Learning: Typically, models are trained and tested using a single dataset, but the testing phase involves unseen classes with very few examples, sometimes as limited as one or two.

Data Characteristics

-

Zero-Shot Learning: Utilizes labeled data for known classes and additional information or attributes, such as textual descriptions or visual characteristics, for unseen classes.

-

Few-Shot Learning: Requires even fewer examples for each unseen class, generally ranging from one to few examples.

Model Flexibility

-

Zero-Shot Learning: Models need to learn the relationship between the visual features and the external knowledge, often represented as semantic embeddings or textual descriptions.

-

Few-Shot Learning: The focus is on leveraging a model’s ability to generalize from limited examples, often through techniques like data augmentation or meta-learning.

Evaluation Metrics

-

Zero-Shot Learning: Typically evaluated using top-K accuracy, where the task is to correctly identify the unseen class within the top-K candidate predictions.

-

Few-Shot Learning: Utilizes more traditional metrics like accuracy, precision, recall, and F1 score.

Key Takeaways

While both strategies are designed to alleviate the need for considerable labeled data, their specific requirements and goals are distinct. Zero-Shot Learning famously targets the task of recognizing objects or concepts with no prior examples, like identifying exotic animals from mere textual descriptions.

On the other hand, Few-Shot Learning aims to fortify a model’s capability to grasp novel concepts with minimal visual evidence, similar to asking a child to recognize a new breed of cat after showing them just a single image.

The intersection of these techniques within transfer learning cements their substantial impact on modern machine learning paradigms, especially in scenarios where extensive labeled data isn’t easily obtainable.

-

- 13.

How do multi-task learning and transfer learning compare?

Answer:Both multi-task learning (MTL) and transfer learning (TL) are strategies that allow models to benefit from knowledge gained on related tasks or domains.

Key Distinctions

Knowledge Sharing

- MTL: Simultaneously trains on multiple tasks, leveraging shared knowledge to improve predictions across all tasks.

- TL: Trains on a source task before using that knowledge to enhance performance on a target task or domain.

Data Requirements

- MTL: Requires joint datasets covering all tasks to identify and exploit their correlations.

- TL: Often works with larger, pre-existing datasets for the source task, and a target task dataset.

Objective Functions

- MTL: Utilizes a combined objective function that considers all tasks, optimizing for global error minimization.

- TL: Initially optimizes for the source task, then fine-tunes or adapts to optimize for the target task.

Model Flexibility

- MTL: Can build task-specific and shared layers within the same model architecture.

- TL: Typically employs one or more distinct strategies like feature extraction or fine-tuning, often relying on pre-trained models.

Computation Efficiency

- MTL: May require more computational resources to optimize multiple objectives and update the model across tasks simultaneously.

- TL: After the initial training on the source task, adaptation to the target task can be computationally more efficient, especially in the case of fine-tuning.

Core Concepts

- Shared Knowledge: Both MTL and TL aim to capitalize on shared structure and insights across tasks or domains.

- Generalization and Adaptation: TL emphasizes adapting previously learned representations to new tasks, while MTL focuses on improved generalization by jointly learning.

- Error Minimization: While MTL focuses on minimizing a joint objective across tasks, TL aims to reduce task-specific or domain-specific errors.

Synergistic Approach

TL makes an excellent starting point for models, often leveraging vast datasets to provide generic expertise. MTL can then complement this by refining this expertise for specific applications or tasks, resulting in the best of both worlds.

- 14.

Discuss the concept of self-taught learning within transfer learning.

Answer:Self-taught Learning is a mechanism central to transferring knowledge from a source task (for which labeled data is available) to a target task (for which labeled data is limited or unavailable).

By enabling the neural network to learn to recognize features or patterns through unsupervised learning, it can adapt to new tasks more effectively.

Self-Taught Learning, Autoencoders, and Pre-Training

A traditional approach for self-taught learning involves using autoencoders to pre-train a neural network on the source data. Once trained, the encoder part of the autoencoder is used as the feature extractor for the target task, often with additional supervised training, also known as fine-tuning.

-

Autoencoders: A neural network is trained to map the input data to a lower-dimensional latent space and then reconstruct the original input from this representation. By learning an efficient coding of the input, an autoencoder can be used to extract task-relevant information.

Training: Unlabeled data is used for training to ensure the network learns a robust representation of the data’s structure.

-

Fine-Tuning: The trained autoencoder, which has learned a representation of the source data, is used as a starting point for the neural network of the target task. The parameters are then further adjusted through the use of labeled data in the target task.

Adapting to Unique Data Distributions

Self-Taught Learning approaches, particularly those employing autoencoders, have been beneficial in domains where labeled data is scarce or costly to obtain.

This transfer mechanism is particularly advantageous when:

- Domain Shifts are present between source and target tasks, meaning the data distributions differ.

- The nature of the target task or the environment it operates in makes obtaining labeled data challenging.

- There are legal or ethical constraints associated with obtaining labeled data for the target task, but unlabeled data is permissible.

- The objective is to reduce the amount of labeled data required for the target task, thereby saving resources.

- The method of transferring knowledge is expected to enhance performance on the target task.

Practical Example: Image Classification

Consider a scenario where a company has amassed a vast library of images for one specific purpose, say classifying vehicles, such as cars, trucks, and motorcycles.

Now, the team wants to expand its scope and develop an image recognition system for wildlife conservation. However, obtaining labeled images of animals in their natural habitat might be costly, impractical, or involve ethical considerations.

Here, using a self-taught learning mechanism, such as an autoencoder, on the pool of labeled vehicle images can extract generalized visual features. Then, these features can be used to pre-train a neural network that is further fine-tuned on a smaller set of labeled wildlife images, making the proposed system a great fit for conserving endangered species.

This approach enables the company to leverage the vast amount of labeled vehicle images and create a model capable of identifying animals with high accuracy, despite minimal labeled data available for wildlife images.

-

Practical Implementation

- 15.

What are the common pre-trained models available for use in transfer learning?

Answer:Numerous pre-trained models are available for tasks like image classification, object detection, and natural language processing. Here are some of the popular ones adapted to different scales and specializations:

Image Classifiers

AlexNet

- Training Dataset: 1.2 million images from 1,000 object categories.

- Accuracy: Top-1: 57.2% - Top-5: 80.0% ILSVRC 2012.

- Key Points: Debuting deep learning model in the ImageNet Large Scale Visual Recognition Challenge 2012.

VGG

- Training Dataset: ImageNet, standard 1,000-category version.

- Accuracy: Top-1: 71% - Top-5: 89%.

- Key Points: Known for its simplicity and tight stacking of convolutional layers, paving the way for deeper architectures.

ResNet

- Training Dataset: ImageNet, standard 1,000-category version.

- Accuracy: Winner of ILSVRC 2015 with top-5 error rate of 3.57%, more accurate as the depth increases.

- Key Points: Leverages residual blocks to address the vanishing gradient problem, enabling training of very deep networks (up to 152 layers).

Inception (GoogLeNet)

- Training Dataset: ImageNet, standard 1,000-category version.

- Accuracy: Top-5 error rate of 6.67%.

- Key Points: Renowned for its inception modules with parallel convolutions of different receptive field sizes.

DenseNet

- Training Dataset: ImageNet, standard 1,000-category version.

- Accuracy: Top-1: 73.9% - Top-5: 91.0%.

- Key Points: Every layer is directly connected to every other layer in a feed-forward fashion, promoting feature reuse.

MobileNet

- Training Dataset: ImageNet, standard 1,000-category version.

- Accuracy: Not as accurate as other architectures due to its focus on reduced model size and efficiency.

- Key Points: Notable for depth-wise separable convolutions, ideal for smartphone and edge deployments.

NASNet

- Training Dataset: ImageNet, standard 1,000-category version.

- Accuracy: Built using neural architecture search and is compatible with the top-1 and top-5 error rates in ImageNet.

EfficientNet

- Training Dataset: ImageNet, standard 1,000-category version.

- Accuracy: Utilizes novel model scaling methods, offering state-of-the-art performance per size on ImageNet when compared to other architectures.